The rapid rise of AI agents (autonomous software entities capable of reasoning, planning, and executing complex tasks) has fundamentally shifted how we interact with data. We have moved from a world of static interaction, where known users followed rigid application paths, to a generative one, where agents write code such as SQL on the fly to query enterprise data platforms.

This shift has brought us to the agentic breaking point.

The problem: Traditional security can't scale to AI

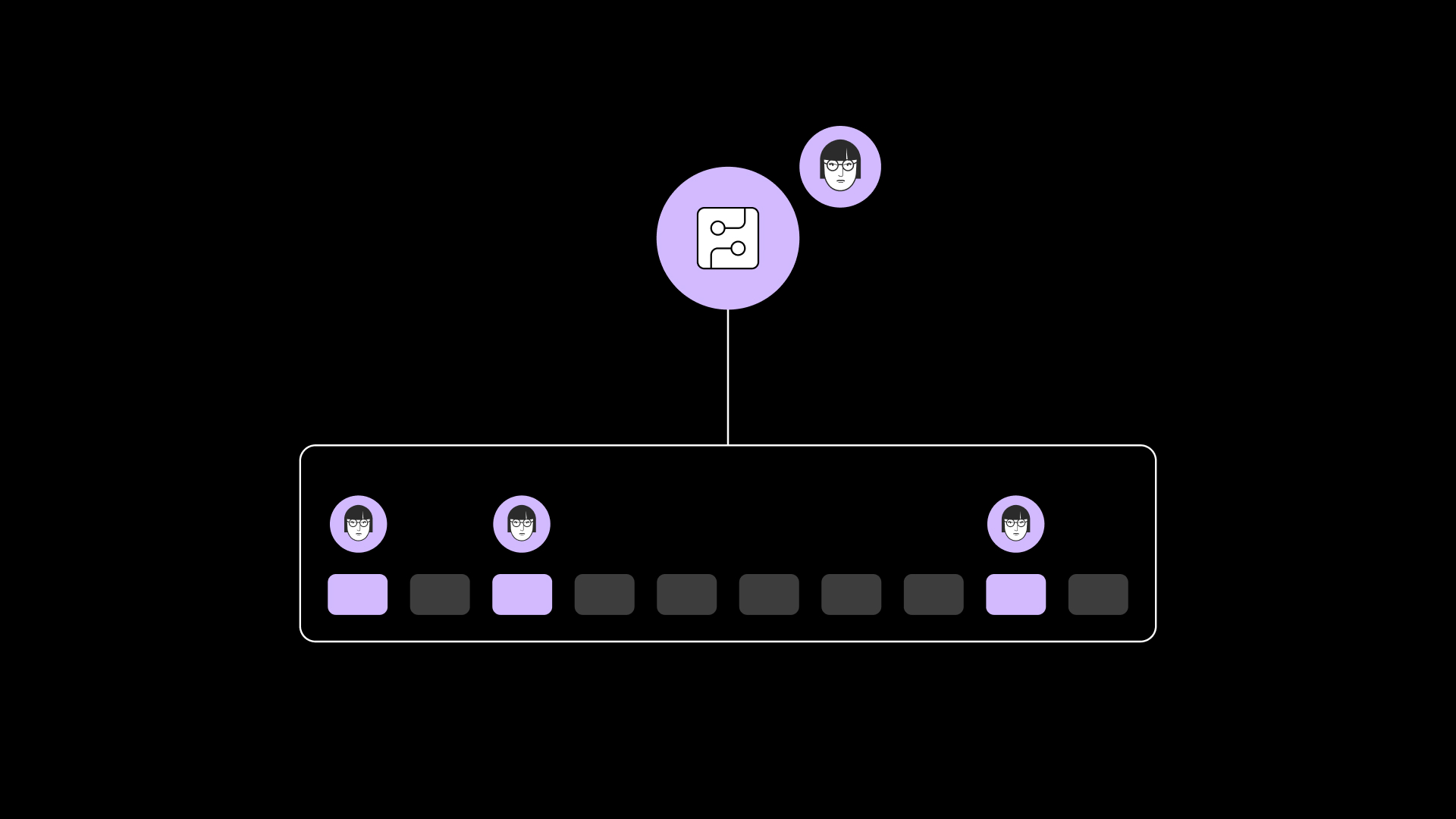

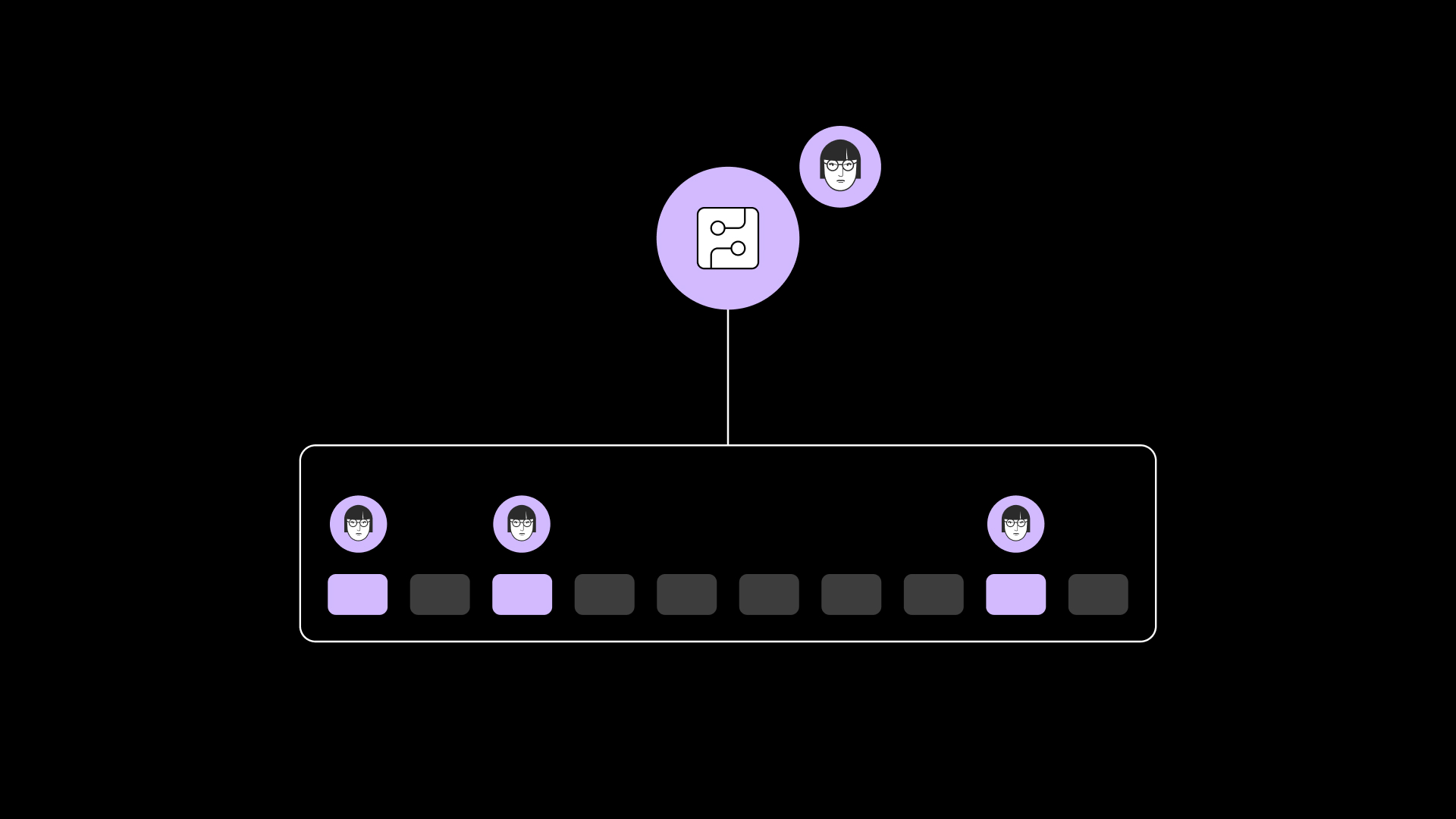

In a traditional environment, a small group of users has direct database access and builds dashboards for everyone else. AI agents, by contrast, let any user ask any question of any data platform. Managing what everyone can “do” everywhere, just in case they ask a question, is a daunting and dangerous task for security teams.

Current authorization frameworks, like OAuth 2.0, were built for a “human-to-app” world and fail in an agent-driven environment for several reasons:

- Account Sprawl: You are forced to provision and manage every single human user in every single underlying data system. You aren’t just defining access policies; you’re manually implementing them everywhere.

- Exposure Risk: By giving every user an active account across the entire stack, you massively expand your attack surface. The number of data-access permutations to manage becomes overwhelming. The result is an environment ripe for access-management errors, and one so slow and rigid that it is nearly impossible to change.

- Scope Explosion at the Data Plane: OAuth scopes are too coarse for databases; attempting to map thousands of granular data permissions to a protocol designed for broad SaaS actions is brittle and unmanageable.

- Rights Inflation: Consider a user with Snowflake’s ACCOUNTADMIN role. If they use an agent via OAuth, that non-deterministic agent inherits their “god-mode” privileges. A single hallucination could trigger unintended, system-wide changes that go far beyond answering a data question.

- Muddy Audit Trails: Query logs attribute the query to the user when, in reality, the agent ran it on the user’s behalf. There is no way to trace back what actually occurred.

The Immuta solution: Policy as the gatekeeper

At Immuta, we believe access can no longer be the gatekeeper to data; policy must be. Our solution treats AI agents as first-class identities with their own attributes, intent, and audit trails.

Instead of an agent “pretending” to be a human through shared credentials, Immuta vends the agent a temporary, session-exclusive role (or group). That role represents exactly what that specific human is allowed to see, down to the table, row, and column level, as defined scalably in Immuta using attribute-based access control (ABAC). These policies are written once in Immuta and enforced across all your data platforms.
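The following minimal Python sketch makes “written once, enforced everywhere” concrete through attribute-based evaluation. The `User`, `TablePolicy`, and `Entitlement` classes and the `evaluate` function are hypothetical illustrations. They are not Immuta’s policy model or API. In this sketch, each policy does three things:

- grants a table to users who hold a given attribute;
- limits rows to the user’s values for one attribute;
- masks columns unless the user holds a further attribute.

The output is platform-neutral. That neutrality is what would let one definition be translated into each platform’s native controls.

```python
from __future__ import annotations
from dataclasses import dataclass, field

# HYPOTHETICAL policy model for illustration only; not Immuta's API.

@dataclass
class User:
    user_id: str
    # Attribute name -> values, e.g. {"department": {"sales"}, "region": {"EMEA"}}
    attributes: dict[str, set[str]]

@dataclass
class TablePolicy:
    table: str
    required: tuple[str, str]           # (attribute, value) needed to see the table
    row_filter_attr: str | None = None  # assumes a column with the same name as the attribute
    unmask_if: dict[str, tuple[str, str]] = field(default_factory=dict)  # column -> (attr, value)

@dataclass
class Entitlement:
    table: str
    row_filter: str | None              # SQL predicate, or None for all rows
    masked_columns: list[str]

def has(user: User, attr: str, value: str) -> bool:
    return value in user.attributes.get(attr, set())

def sql_literal(value: str) -> str:
    return "'" + value.replace("'", "''") + "'"  # escape embedded single quotes

def evaluate(user: User, policies: list[TablePolicy]) -> list[Entitlement]:
    """Derive one user's table, row, and column entitlements from attribute-based policies."""
    entitlements = []
    for policy in policies:
        if not has(user, *policy.required):
            continue  # user lacks the attribute: the table stays invisible
        row_filter = None
        if policy.row_filter_attr:
            values = sorted(user.attributes.get(policy.row_filter_attr, set()))
            row_filter = (
                f"{policy.row_filter_attr} IN ({', '.join(map(sql_literal, values))})"
                if values else "FALSE"  # no attribute values: no rows
            )
        masked = sorted(
            col for col, (attr, value) in policy.unmask_if.items() if not has(user, attr, value)
        )
        entitlements.append(Entitlement(policy.table, row_filter, masked))
    return entitlements

alice = User("alice", {"department": {"sales"}, "region": {"EMEA"}})
policies = [
    TablePolicy("SALES.ORDERS", ("department", "sales"), "region",
                {"CUSTOMER_EMAIL": ("clearance", "pii")}),
    TablePolicy("HR.SALARIES", ("department", "hr")),
]
ents = evaluate(alice, policies)
```

The decision depends on attributes rather than per-user grants. Onboarding another EMEA sales analyst therefore means assigning attributes, not editing every table.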

Technical Deep Dive: The On-Behalf-Of (OBO) Workflow

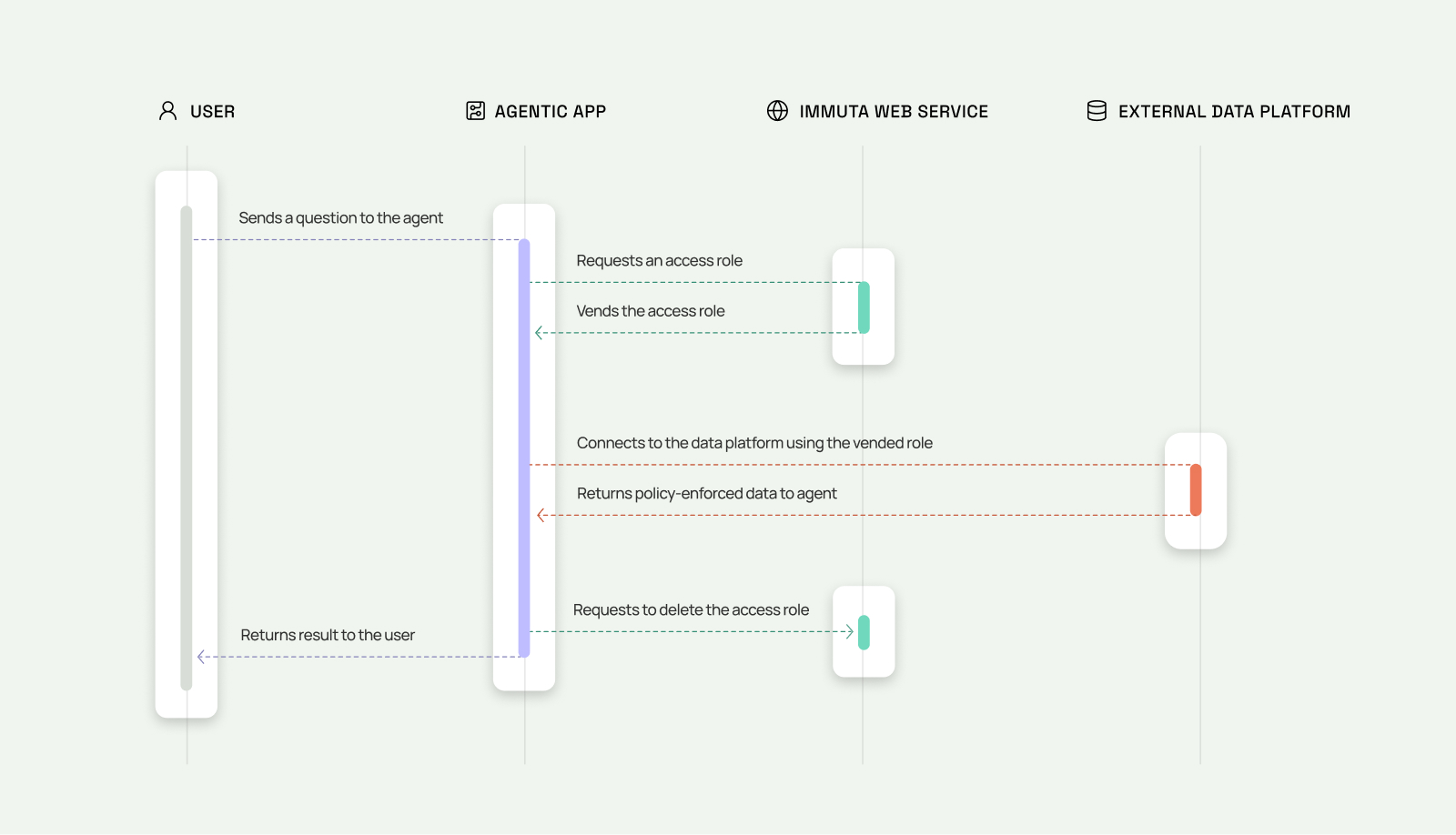

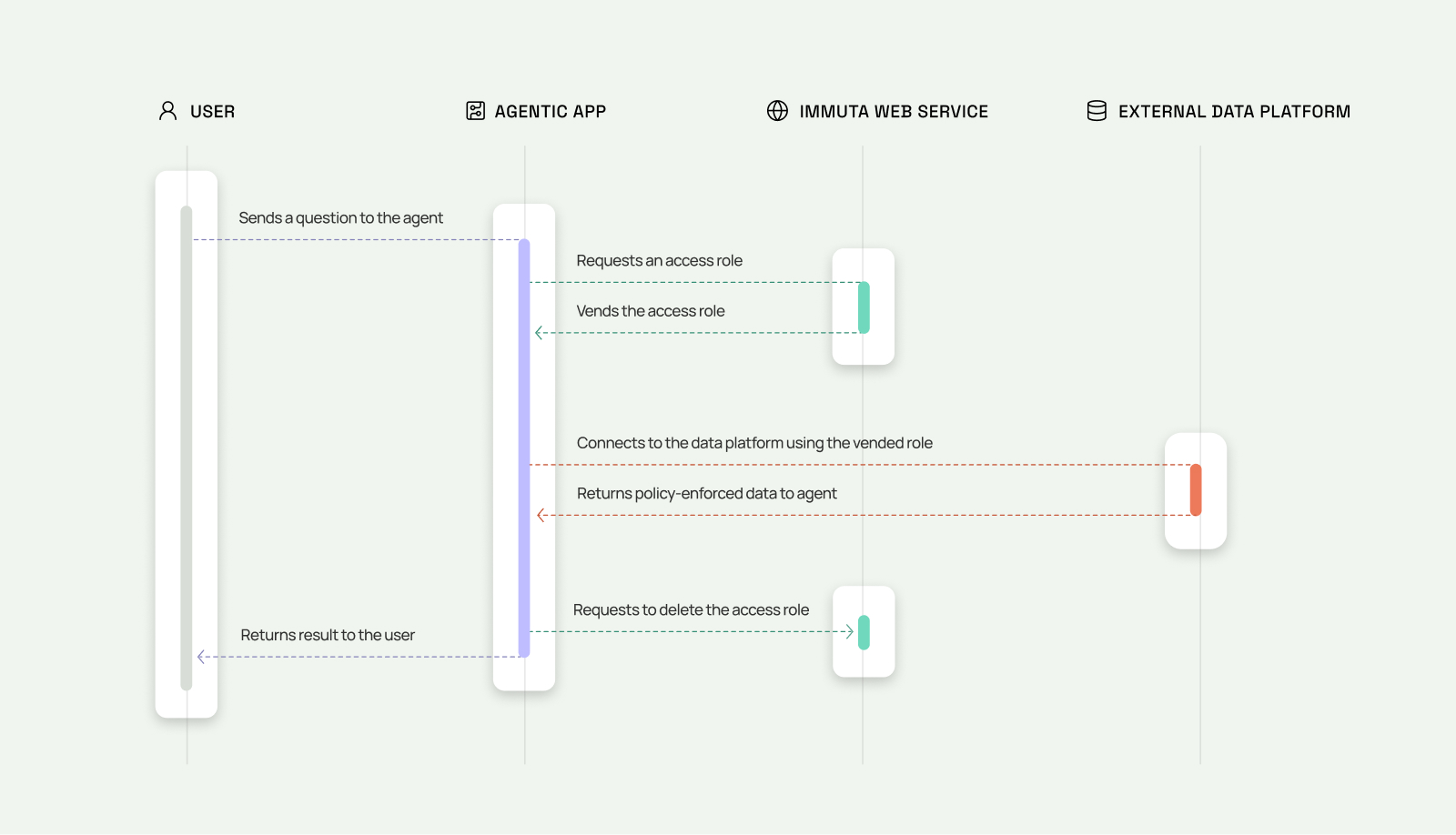

The core of this architecture is the Ephemeral Role Vending mechanism. It lets agents borrow a user’s data access in real time while maintaining Zero Standing Privileges (ZSP): no access exists until a task needs it.

1. The Synchronous Request

When a user prompts an agent (e.g., one built with a framework like LangChain), the agent authenticates to the Immuta OBO API. It then submits the authenticated user’s unique ID along with the target data platform(s). Immuta immediately performs an Identity-to-Policy Mapping to calculate the permissions defined for that user, which exist as policy rather than as standing grants.
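A minimal Python sketch of the agent side of this call follows. The endpoint path `/obo/roles`, the JSON field names, and the `role_name` response field are hypothetical placeholders, not Immuta’s documented API. What matters is the shape of the exchange: the agent authenticates with its own credential and names the user it acts for, rather than presenting the user’s credentials.

```python
from __future__ import annotations
import json
import urllib.request
from dataclasses import dataclass, asdict

# HYPOTHETICAL path and field names; placeholders, not Immuta's documented API.
OBO_PATH = "/obo/roles"

@dataclass
class OboRequest:
    agent_id: str         # the agent's own identity
    user_id: str          # the authenticated end user the agent acts for
    platforms: list[str]  # target data platform(s)

def build_obo_request(base_url: str, agent_token: str, req: OboRequest) -> urllib.request.Request:
    """Build, but do not send, the agent's synchronous on-behalf-of call."""
    if not req.platforms:
        raise ValueError("at least one target platform is required")
    return urllib.request.Request(
        url=base_url.rstrip("/") + OBO_PATH,
        data=json.dumps(asdict(req)).encode("utf-8"),
        method="POST",
        headers={
            # The agent authenticates with its own credential, never the user's.
            "Authorization": f"Bearer {agent_token}",
            "Content-Type": "application/json",
        },
    )

request = build_obo_request(
    "https://immuta.example.com",
    "agent-token",
    OboRequest(agent_id="SALESBOT", user_id="ALICE", platforms=["snowflake"]),
)
# Sending it would block until the role name comes back (hypothetical response field):
# with urllib.request.urlopen(request, timeout=10) as resp:
#     role_name = json.load(resp)["role_name"]
```

Keeping the agent’s credential separate from the user’s ID is what lets the returned role, and later the audit trail, distinguish the agent from the human.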

2. Deterministic Role Generation

Immuta synchronously returns a unique, traceable role name to the agent, following a strict naming convention: IMMUTA_VENDED_<AGENT_ID>_<USER_ID>_<UUID>. This name alone allows security teams to reconstruct exactly which agent acted for which human during forensic query history audits.
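The naming convention lends itself to a small builder and parser, sketched below in Python. The function names are hypothetical. The sketch assumes agent and user IDs are alphanumeric (no underscores), so each name splits unambiguously. It also uses a hyphen-free UUID, so the result is a plain unquoted identifier on platforms such as Snowflake.

```python
from __future__ import annotations
import re
import uuid

PREFIX = "IMMUTA_VENDED_"
# Assumption: IDs are alphanumeric (no underscores), so each name splits unambiguously.
_ID = re.compile(r"[A-Z0-9]+")

def vend_role_name(agent_id: str, user_id: str) -> str:
    """Build IMMUTA_VENDED_<AGENT_ID>_<USER_ID>_<UUID> with a fresh UUID per session."""
    agent, user = agent_id.upper(), user_id.upper()
    for part in (agent, user):
        if not _ID.fullmatch(part):
            raise ValueError(f"ID must be alphanumeric: {part!r}")
    # uuid4().hex has no hyphens, so the result is a plain unquoted SQL identifier.
    return f"{PREFIX}{agent}_{user}_{uuid.uuid4().hex.upper()}"

def parse_role_name(name: str) -> tuple[str, str, str]:
    """Recover (agent_id, user_id, uuid) from a role name found in query history."""
    if not name.startswith(PREFIX):
        raise ValueError(f"not a vended role: {name!r}")
    parts = name[len(PREFIX):].split("_")
    if len(parts) != 3:
        raise ValueError(f"unexpected role name format: {name!r}")
    agent, user, session = parts
    return agent, user, session

role = vend_role_name("salesbot", "alice")
print(parse_role_name(role)[:2])  # ('SALESBOT', 'ALICE')
```

Running the parser over the role names recorded in a platform’s query history yields the agent/user pair behind each query. The UUID distinguishes separate sessions of the same pair.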

3. Instant Policy Synthesis: Security Without the Overhead

To ensure AI agents can act immediately without the lag of manual provisioning, Immuta uses a process called Instant Policy Synthesis. This creates a temporary trust bridge between the agent and your data platform, with two major advantages:

- The “No Account” Advantage: Because Immuta manages the policy externally, the user does not need a login or a defined account in the data platform (like Snowflake). The agent acts as the secure gateway, and Immuta ensures the user’s corporate access policies are applied to the session automatically.

- The Power of Policy Union: Immuta dynamically blends two sets of permissions into one temporary role:

  - The User’s Identity: The agent “inherits” the specific table access, row filters, and column masks that are defined for that human user.

  - The Agent’s Toolset: Simultaneously, the role is granted the technical “tools” it needs to function, such as access to LLM analyst tools or specific semantic views, which the end user doesn’t need to manage or understand.
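The policy union can be sketched as a set union with one guard. This is a hypothetical Python model: `Grant`, `UserPolicy`, `VendedRole`, `synthesize_role`, and the `TOOL_OBJECTS` catalog are all illustrative names. The agent’s tool grants are accepted only on known tool objects. Row filters and column masks come solely from the user’s policy, so the tools cannot widen what data the role can read.

```python
from __future__ import annotations
from dataclasses import dataclass, field

# HYPOTHETICAL model for illustration; not Immuta's or any platform's API.

@dataclass(frozen=True)
class Grant:
    privilege: str  # e.g. "SELECT" or "USAGE"
    obj: str        # fully qualified object name

@dataclass
class UserPolicy:
    grants: set[Grant]                 # tables the user may read
    row_filters: dict[str, str]        # table -> SQL predicate
    column_masks: dict[str, set[str]]  # table -> masked columns

@dataclass
class VendedRole:
    name: str
    grants: set[Grant] = field(default_factory=set)
    row_filters: dict[str, str] = field(default_factory=dict)
    column_masks: dict[str, set[str]] = field(default_factory=dict)

# Hypothetical catalog of objects that are agent tooling rather than governed data.
TOOL_OBJECTS = {"ANALYST.LLM_TOOLS", "ANALYST.SEMANTIC_SALES_VIEW"}

def synthesize_role(name: str, user: UserPolicy, tool_grants: set[Grant]) -> VendedRole:
    """Union the user's data entitlements with the agent's tool grants into one role."""
    stray = sorted(g.obj for g in tool_grants if g.obj not in TOOL_OBJECTS)
    if stray:
        # A "tool" grant on anything else could expose data the user cannot see.
        raise ValueError(f"tool grants outside the tool catalog: {stray}")
    return VendedRole(
        name=name,
        grants=user.grants | tool_grants,
        # Filters and masks are copied from the user only; tools never relax them.
        row_filters=dict(user.row_filters),
        column_masks={table: set(cols) for table, cols in user.column_masks.items()},
    )

alice = UserPolicy(
    grants={Grant("SELECT", "SALES.ORDERS")},
    row_filters={"SALES.ORDERS": "region IN ('EMEA')"},
    column_masks={"SALES.ORDERS": {"CUSTOMER_EMAIL"}},
)
role = synthesize_role("IMMUTA_VENDED_SALESBOT_ALICE_EXAMPLE", alice,
                       {Grant("USAGE", "ANALYST.LLM_TOOLS")})
```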

The Result: Your platform team is freed from the access provisioning bottleneck. The agent gets the high-powered tools it needs to be helpful, but the data it retrieves is strictly locked down by the user’s specific access policy profile. It’s the ultimate “least-privilege” model: the right tools, the right data, only for the duration of the task.

4. Execution and Cleanup

The agent executes the query using the vended role. Once the task is complete or the Time-to-Live (TTL) expires, Immuta automatically drops the role. This ensures there are no ghost accounts or residual permissions left behind for exploitation.
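The cleanup guarantee can be sketched as a registry that drops a role when the task ends or when its TTL passes, whichever comes first. The `RoleRegistry` class below is hypothetical. Its `execute` callable stands in for running SQL against the platform; `USE ROLE` and `DROP ROLE IF EXISTS` are Snowflake syntax. The clock is injectable so expiry can be demonstrated without waiting, and the example role names are shortened.

```python
from __future__ import annotations
import time
from contextlib import contextmanager
from typing import Callable, Iterator

class RoleRegistry:
    """HYPOTHETICAL tracker that drops vended roles on task completion or TTL expiry."""

    def __init__(self, execute: Callable[[str], None],
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._execute = execute              # stand-in for running SQL on the data platform
        self._clock = clock
        self._expiry: dict[str, float] = {}  # role name -> absolute expiry time

    def register(self, role: str, ttl_seconds: float) -> None:
        self._expiry[role] = self._clock() + ttl_seconds

    def drop(self, role: str) -> None:
        # Idempotent: only roles still tracked are dropped. Names are assumed to be
        # pre-validated identifiers, since they are interpolated into SQL.
        if self._expiry.pop(role, None) is not None:
            self._execute(f"DROP ROLE IF EXISTS {role}")

    def sweep(self) -> list[str]:
        """Drop every role whose TTL has passed and return their names."""
        now = self._clock()
        expired = [role for role, deadline in self._expiry.items() if deadline <= now]
        for role in expired:
            self.drop(role)
        return expired

    @contextmanager
    def session(self, role: str, ttl_seconds: float) -> Iterator[str]:
        """Yield the role for one task and drop it when the task ends, even on error."""
        self.register(role, ttl_seconds)
        try:
            yield role
        finally:
            self.drop(role)

issued: list[str] = []
now = [0.0]
registry = RoleRegistry(issued.append, clock=lambda: now[0])

with registry.session("IMMUTA_VENDED_SALESBOT_ALICE_A1", ttl_seconds=300) as role:
    issued.append(f"USE ROLE {role}")  # the agent's queries would run here
registry.register("IMMUTA_VENDED_SALESBOT_BOB_B2", ttl_seconds=60)  # task never reports back
now[0] = 61.0
dropped = registry.sweep()
```

Because `drop` is idempotent, the task-completion path and the TTL sweep can both fire for the same role without error. Whichever runs second finds nothing left to drop.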

The path forward: Context-aware governance

This architecture is just the first step. By decoupling policy from the platform, Immuta is moving toward Question-Time Access, where agents can request additional access on behalf of users in real time. This will enable a new era of negotiated access, where security is a smart facilitator rather than a static barrier.

For organizations ready to move AI projects out of the proverbial sandbox and into production, Immuta provides the certainty of security required to scale safely.

Don't let access become your AI bottleneck.

When agents hit a permission wall, your AI initiative stalls. Immuta provisions governed, temporary access for agents, automatically and in real time.